AI Transforming Healthcare: Microsoft Scientist Leads Ethical Development

Seattle, WA - March 17th, 2026 - The promise of artificial intelligence in healthcare is rapidly shifting from futuristic concept to tangible reality. Krishna Murthy, lead scientist at Microsoft Health Futures, embodies this transformation, spearheading efforts not only to develop AI diagnostic and treatment tools but also to ensure that they are applied ethically and equitably. While the initial hype surrounding AI in medicine often centered on robotic surgery and automated care, the most impactful advances are proving to be in data analysis and augmented intelligence: tools designed to empower, not replace, human clinicians.

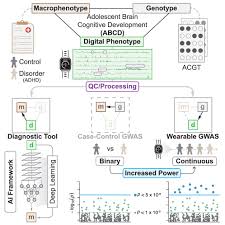

Murthy's work, as outlined in recent interviews and publications, focuses on harnessing AI to navigate the exponentially growing sea of medical data. Hospitals, clinics, and research labs collectively generate petabytes of information every day, from patient histories and genomic sequences to detailed medical images. Traditionally, analyzing this data for meaningful insights has been a slow and resource-intensive process. AI, particularly machine learning, offers the potential to accelerate it dramatically, identifying patterns and anomalies that a human could not realistically detect in a reasonable timeframe.
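As a minimal illustration of what flagging an anomaly can mean in practice, the sketch below compares new readings against a patient's own baseline and flags any that fall more than k standard deviations from the baseline mean. The heart-rate framing, the function name `flag_anomalies`, and the threshold k = 3 are illustrative assumptions, not part of Murthy's work; real clinical systems rely on far richer, validated models.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, new_readings, k=3.0):
    """Return indices of `new_readings` lying more than k standard deviations
    from the mean of `baseline`.

    Hypothetical helper for illustration only. `baseline` needs at least two
    values (statistics.stdev raises StatisticsError otherwise).
    """
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation of the baseline
    if sigma == 0:
        # A perfectly flat baseline gives no scale to judge deviations against.
        return []
    return [i for i, x in enumerate(new_readings) if abs(x - mu) > k * sigma]


# Hypothetical resting heart-rate data (beats/min) from a wearable.
baseline_hr = [62, 65, 63, 64, 66, 61, 63, 64]
print(flag_anomalies(baseline_hr, [64, 63, 97, 62]))  # [2]
```

Scoring new readings against a separate baseline window, rather than against the same readings being tested, keeps a single extreme value from inflating the standard deviation and masking itself.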

One crucial area of focus is medical imaging. AI algorithms can be trained to analyze X-rays, MRIs, CT scans, and other imaging modalities with remarkable accuracy, identifying subtle indicators of disease that might be missed by even the most experienced radiologists. This isn't simply about faster results; it's about earlier detection, which can be critical in treating conditions like cancer, stroke, and heart disease. Automation of tedious aspects of image analysis also frees up radiologists to concentrate on complex cases requiring their nuanced judgment and expertise.

However, Murthy and his team recognize a significant and potentially dangerous pitfall: algorithmic bias. "The biggest challenge isn't building the AI," Murthy stated in a recent panel discussion at the Healthcare Innovation Summit, "it's building fair AI." Historically, medical datasets have suffered from a distinct lack of diversity. Data overwhelmingly represents the experiences of white, male patients, leading to algorithms that perform less accurately when applied to women and people of color. This disparity isn't intentional, but stems from historical inequities in healthcare access and data collection practices.
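A disparity like this is usually surfaced by scoring a model separately for each demographic group instead of reporting a single overall figure. The minimal sketch below does that with plain accuracy; the function names, the toy labels, and the group names "A" and "B" are hypothetical, and a real audit would also compare clinically relevant metrics such as per-group sensitivity.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: accuracy} for a model's predictions, split by subgroup."""
    if not (len(y_true) == len(y_pred) == len(groups)):
        raise ValueError("y_true, y_pred and groups must have the same length")
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


def accuracy_gap(per_group):
    """Difference between the best- and worst-served groups (0 means parity)."""
    return max(per_group.values()) - min(per_group.values())


# Toy labels: 1 = disease present, 0 = absent. Groups are hypothetical.
y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
per_group = accuracy_by_group(y_true, y_pred, groups)
print(per_group)                # {'A': 1.0, 'B': 0.25}
print(accuracy_gap(per_group))  # 0.75
```

Here an overall accuracy of 5/8 would hide the fact that every error falls on group B, which is exactly the kind of gap a per-group breakdown is meant to expose.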

This bias isn't merely a statistical quirk; it has real-world consequences. An AI system trained primarily on data from one demographic group might misdiagnose conditions in patients from other groups, leading to delayed treatment or inappropriate care. Murthy's team is actively developing techniques to identify and mitigate these biases, including data augmentation (creating synthetic data to balance representation) and adversarial training (challenging the AI to perform equally well across all demographics). They are also advocating for greater transparency in AI development, allowing independent researchers to audit algorithms for bias and fairness.
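The article does not say how Murthy's team generates synthetic data. One widely used technique for this kind of rebalancing is SMOTE, which creates new examples for an under-represented group by interpolating between an existing example and one of its nearest neighbours from the same group. The stdlib-only sketch below implements that core idea; `smote_like`, its parameters, and the toy feature vectors are assumptions for illustration.

```python
import math
import random


def smote_like(minority, n_new, k=3, seed=0):
    """Create `n_new` synthetic points by SMOTE-style interpolation.

    Each synthetic point lies on the segment between a randomly chosen
    minority sample and one of its k nearest minority neighbours.
    Hypothetical helper, not a production implementation.
    """
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)      # seeded for reproducibility
    k = min(k, len(minority) - 1)  # cannot have more neighbours than other points
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: math.dist(base, p))[:k]
        partner = rng.choice(neighbours)
        u = rng.random()           # interpolation weight in [0, 1)
        synthetic.append(tuple(b + u * (q - b) for b, q in zip(base, partner)))
    return synthetic


# Toy two-feature vectors for an under-represented group (hypothetical values).
minority = [(1.0, 2.0), (1.5, 2.5), (2.0, 2.0)]
majority_count = 8
new_points = smote_like(minority, n_new=majority_count - len(minority))
print(len(minority) + len(new_points))  # 8
```

Synthetic points only recombine information already present in the minority samples, so this kind of rebalancing complements, rather than replaces, collecting more representative data.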

The concept of 'augmentation,' rather than replacement, is central to Murthy's philosophy. He emphasizes that AI is not intended to usurp the role of doctors, but to enhance their capabilities. "Our goal isn't to create AI doctors," he explains. "It's to create AI that empowers doctors to be better doctors." This means providing clinicians with access to more comprehensive and actionable data, helping them make more informed decisions, and ultimately improving patient outcomes.

Beyond diagnostics, Murthy's team is exploring the potential of AI to predict patient outcomes and personalize treatment plans. By analyzing a patient's medical history, genetic information, lifestyle factors, and real-time data from wearable sensors, AI can identify individuals at high risk of developing certain conditions and tailor interventions accordingly. This proactive approach to healthcare, predicting and preventing disease before it manifests, could revolutionize the way medicine is practiced.
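To make the idea of a risk model concrete, the sketch below combines a few of the factor types the paragraph mentions into a logistic risk score and flags patients above a threshold. Every feature, coefficient, and the 0.2 cut-off are hypothetical values chosen for illustration, not taken from Murthy's work or any clinical study; a real model would be fitted to, and validated on, patient data.

```python
import math
from dataclasses import dataclass


@dataclass
class Patient:
    age: float            # years (medical history)
    family_history: bool  # e.g. a relevant genetic/family risk marker
    smoker: bool          # lifestyle factor
    resting_hr: float     # beats/min, e.g. averaged from a wearable sensor


# Hypothetical coefficients for illustration only.
INTERCEPT = -7.0
W_AGE, W_FAMILY, W_SMOKER, W_HR = 0.04, 0.9, 0.7, 0.03


def risk_probability(p: Patient) -> float:
    """Logistic model: probability = 1 / (1 + exp(-linear score))."""
    z = (INTERCEPT
         + W_AGE * p.age
         + W_FAMILY * float(p.family_history)
         + W_SMOKER * float(p.smoker)
         + W_HR * p.resting_hr)
    return 1.0 / (1.0 + math.exp(-z))


def flag_high_risk(patients, threshold=0.2):
    """Indices of patients whose estimated risk meets the threshold."""
    return [i for i, p in enumerate(patients) if risk_probability(p) >= threshold]


cohort = [
    Patient(age=35, family_history=False, smoker=False, resting_hr=62),
    Patient(age=68, family_history=True, smoker=True, resting_hr=88),
]
print(flag_high_risk(cohort))  # [1]
```

In keeping with the augmentation philosophy, the output is a list of patients for a clinician to review, not an automatic treatment decision.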

Crucially, Murthy underscores the necessity of interdisciplinary collaboration. Developing responsible AI in healthcare requires a confluence of expertise, not just from engineers and data scientists but also from clinicians, ethicists, and legal experts. "We need ethicists at the table from the very beginning," Murthy stresses. "We need to proactively consider the societal impact of these technologies and ensure that they are used in a way that benefits everyone, not just a select few." The future of healthcare, according to Murthy, isn't just about technological innovation; it's about ensuring that innovation serves humanity.

Read the full Time article at:

https://time.com/7379241/time100-health-toasts-musunuru-burnight-eisenberg/
